SigmaMind AI x Deepgram: Real-Time Speech-to-Text for Scalable Voice AI

SigmaMind AI partners with Deepgram to power real-time speech-to-text for voice AI agents. Learn how low-latency transcription enables faster responses, better interruption handling, and scalable performance.

.png)

Voice AI only works if every part of the pipeline works. The LLM, the tool calls, the text-to-speech - they all depend on one thing happening first: converting raw audio into text your system can reason over. Get that step wrong, and the cost compounds in every direction.

Today, SigmaMind AI is announcing our official partnership with Deepgram, the real-time speech-to-text engine powering our voice agent platform. With over a million calls per month flowing through our infrastructure, this is the story of the decision that made production scale actually possible - and the results that followed.

What Is Real-Time Speech-to-Text in Voice AI?

Real-time speech-to-text (STT) converts live audio into text instantly, enabling voice AI systems to:

- understand user intent mid-sentence

- trigger actions before the speaker finishes

- reduce latency in responses

- improve conversation flow

This is critical for production-grade voice AI systems where delays break user experience.

Why Speech-to-Text Is the Most Critical Layer in Voice AI Infrastructure

When we evaluated STT providers, we weren't benchmarking clean audio in a quiet room. We were stress-testing against real production conditions: callers who interrupt mid-sentence, telephony-compressed audio, domain-specific vocabulary, and traffic spikes that double peak concurrency without warning.

The capability that kept rising to the top wasn't final transcript accuracy - though that matters. It was the quality and speed of interim transcripts: partial results that your system can start acting on before the speaker has finished their sentence. That distinction changes everything about how a voice agent feels to the person on the other end of a call.

"When we began acting on interim transcripts and combined that with word timestamps, the agent could trigger API calls and follow-ups mid-utterance. That shift unlocked much richer, multi-step voice workflows."

- Pratik Mundra, Co-founder, SigmaMind AI

Deepgram's streaming API returns interim results fast enough that our orchestration engine begins spinning up LLM context, pre-fetching likely tool inputs, and staging a response before the utterance ends. By the time the speaker finishes, we're already moving. That's what sub-one-second voice-to-voice latency looks like in practice - including telephony overhead.

Why SigmaMind AI Chose Deepgram for Real-Time Speech-to-Text

Beyond interim transcripts, several capabilities proved essential once we were in production at scale:

Native Opus and PCM support - our audio pipeline runs on LiveKit over WebRTC. Deepgram handles both formats natively, removing a transcoding step that was adding latency and CPU overhead we didn't need.

Word-level timestamps - precise alignment between transcript segments and audio tracks enables speaker attribution, time-accurate tool triggering, and the post-call analytics our enterprise customers depend on.

Custom vocabulary and keyterm prompting - better recognition of product names, CRM identifiers, and domain-specific terms without post-processing workarounds.

Enterprise security - TLS encryption, token-based auth, and configurable data retention that meets compliance requirements in financial services, healthcare, and insurance.

Stable performance at 150+ concurrent sessions - no degradation under the load profiles our largest customers run.

We currently deploy Deepgram's Nova-3 and Flux models, with model selection optimized internally based on streaming latency, endpointing reliability, and accuracy for tool-triggering phrases. The abstraction is invisible to end users - they just notice the agent keeps up.

What this means for businesses running on SigmaMind

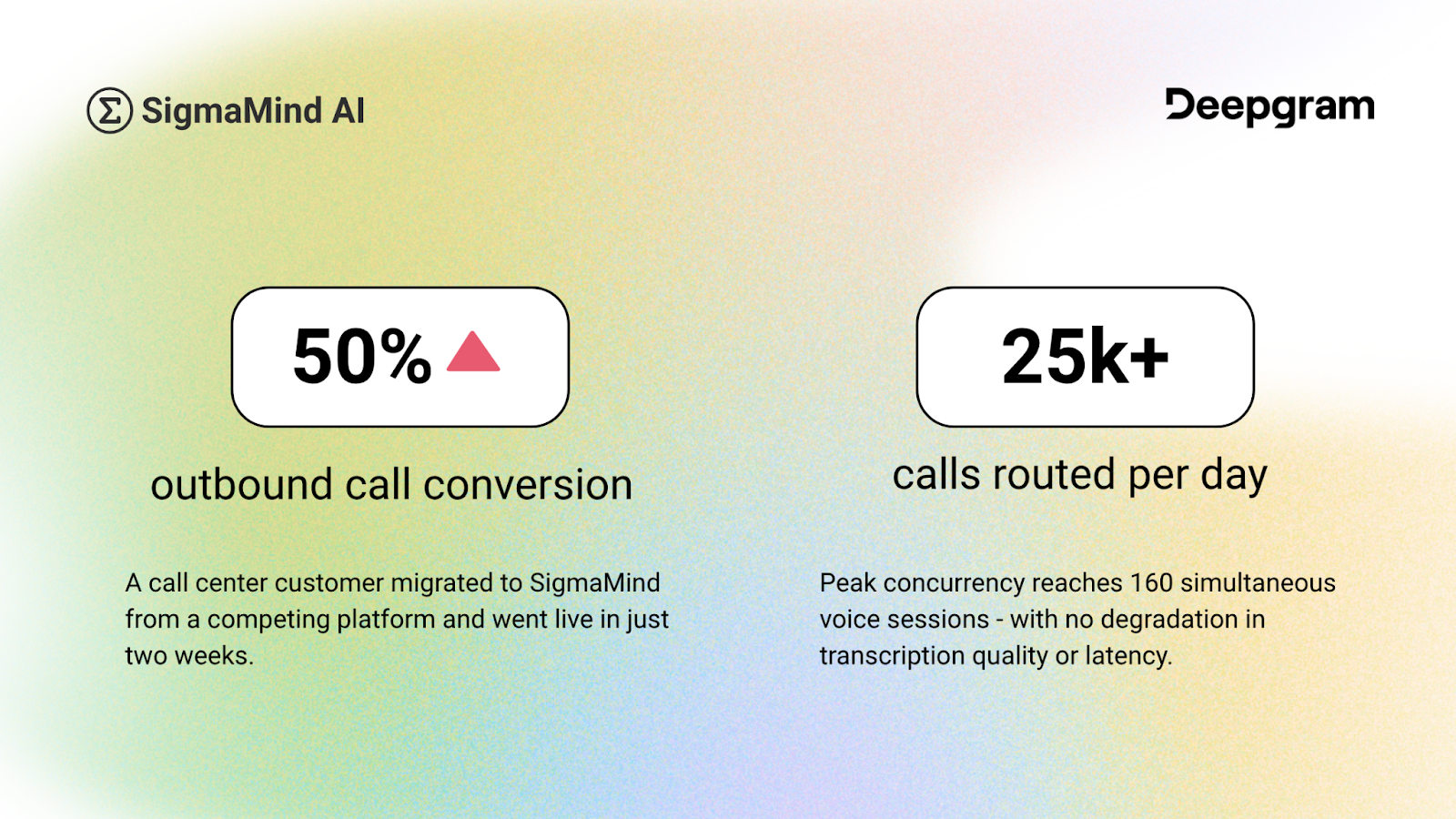

The best proof of an infrastructure decision isn't a benchmark - it's what happens when a real customer runs real calls at real volume.

During a live enterprise demo, a user repeatedly interrupted the agent mid-conversation. The agent handled each interruption correctly and completed the workflow without restarting. That's not a demo trick. It's what accurate, low-latency interim transcription makes possible in a well-built pipeline.

What's next

We're expecting call volume to grow 10x over the next six months. On our near-term roadmap: batch transcription for post-call QA workflows, deeper CRM and scheduling integrations for regulated industries, MCP server support for developer tooling, and continued work on interruption handling and barge-in detection.

The Deepgram partnership is central to all of it. Voice AI at production scale requires more than accurate models - it requires reliable, observable infrastructure that holds up when volume spikes and conversations get messy. That's the foundation we're building on together.

Read the full case study for the complete technical breakdown and customer results.

FAQ: Speech-to-Text in Voice AI

What is speech-to-text in voice AI?

It converts audio into text so AI systems can understand and respond.

Why are interim transcripts important?

They allow systems to act before the speaker finishes, reducing latency.

How does Deepgram improve voice AI performance?

By providing fast streaming transcription, accurate timestamps, and scalable infrastructure.

What is low-latency voice AI?

Voice systems that respond in near real time (sub-second).