12 Best Multilingual TTS Engines (2026) for Voice Agents

See the 12 best multilingual TTS engines for 2026 voice agents: real-time picks, cost math, latency targets, and routing tips. Compare and choose now.

TL;DR

No single multilingual TTS engine wins across every language, use case, and budget. The smartest approach in 2026 is to pick two engines per target language and route dynamically based on quality, cost, and latency. Pricing ranges wildly, from roughly $7 per million characters to over $100, and quality drops sharply outside Tier-1 languages like English, Spanish, and Mandarin. This guide covers 12 options (cloud APIs, boutique specialists, and open-source models), with honest cost math, code-switching pitfalls, and a framework for choosing what actually works in production.

Why Picking One Multilingual TTS Engine Is a Losing Strategy

The multilingual text-to-speech market in 2026 looks nothing like it did two years ago. Open-source models now run on consumer hardware. Boutique vendors have pushed naturalness past what hyperscalers offer in major languages. And pricing has split into three distinct bands that can make or break your unit economics at scale.

But here is the uncomfortable truth that most vendor comparison pages skip: languages come in tiers. Tier-1 languages (such as English, Spanish, French, German, Mandarin, Japanese, and Korean) sound great across most engines. Tier-2 languages (such as Arabic, Hindi, Turkish, Dutch, and Russian) are good but uneven. Niche languages often regress to accented, unnatural output. The demos vendors play on their websites are recorded in quiet studios. Production calls happen over phone lines with background noise, compression artifacts, and impatient listeners.

That gap between demo quality and phone-line reality is where most multilingual TTS deployments break down. Practitioners on Reddit consistently report that “multilingual quality is uneven, and stress patterns in Italian and code-switching expose weaknesses many demos hide.” The solution is not to find a perfect engine. It is to build an orchestration layer that lets you mix engines per locale, swap vendors as quality shifts, and measure everything.

This is exactly what platforms like SigmaMind AI are built for: model-agnostic orchestration across TTS providers like ElevenLabs, Rime, and Cartesia, with production telemetry so you can track cost and latency per layer and per language.

At-a-Glance Comparison Table

| Engine | Best For | Language Coverage | Streaming/TTFA | Voice Cloning | Pricing Band (per 1M chars unless noted) | Practitioner Highlight |
|---|---|---|---|---|---|---|
| ElevenLabs v3 | Creator-grade naturalness | Tier-1 strong, Tier-2 good | Yes / fast | Instant + Professional | Premium ($100+) | “Premium quality but expensive once you scale” |
| Cartesia Sonic-3 | Low-latency voice agents | 40+ languages reported | Sub-second focus | Limited | Mid ($30–40) | “600+ voices and emotion control tags” |
| Rime Arcana v3 | Bilingual EN-ES realism | Bilingual + multilingual models | Sub-500ms target | Yes | Mid ($30–40) | “Conversational micro-prosody stands out” |
| Deepgram Aura-2 | Single-vendor STT+TTS stack | Growing (recently added 5 langs) | Sub-200ms claimed | No | Mid ($30–40) | “Decent streaming but can be choppy” |
| Google Cloud TTS | Broadest locale coverage | 80+ locales | Yes / varies | Custom Voice | Mid ($30–40) | “Solid quality; config quirks per locale” |
| Azure Neural TTS | Enterprise catalog + compliance | Largest hyperscaler catalog | Yes / varies | Custom Neural Voice | Mid ($30–40) | “Good for English; Hindi behind boutiques” |
| Amazon Polly | AWS ecosystem stability | Broad, expanding Generative | Yes / standard | No | Budget–Mid ($7–30) | “Reliable but dated vs. boutique leaders” |
| PlayHT 2.x | Expressive cloning for creators | Good breadth | Yes | Strong | Mid–Premium (plans from ~$39/mo) | “Great realism; occasional reliability hiccups” |
| Resemble AI | Regulated enterprise cloning | Good, not market-leading | Yes | Enterprise-grade | Mid (credit bundles) | “Less AI-sounding timbre; solid support” |
| Kokoro TTS | Lightweight on-device | Multilingual packs available | Real-time local | Community add-ons | Free (your infra) | “Sweet spot for local, but Chinese prosody robotic” |
| Coqui XTTS-v2 | Zero-shot multilingual cloning | Good for supported langs | Near real-time | Zero-shot | Free (your infra) | “Accent bleed when forcing unsupported languages” |
| Fish/Qwen/Voxtral/TADA | Bleeding-edge open multilingual | Varies (5–9+ languages) | Varies | Model-dependent | Free (your infra) | “Voxtral runs on ~3GB VRAM with 9 languages” |

Pricing as of April 2026; ranges come from third-party aggregators. Verify vendor rate cards before committing.

The LACES Framework: How to Evaluate Multilingual TTS

Before comparing engines, get clear on what you actually need. Use this five-point framework:

Languages and dialects you truly need. Not how many an engine supports, but whether it handles your specific locales. Mexican Spanish and Castilian Spanish are not the same. Gulf Arabic and Levantine Arabic sound very different. And code-switching (mixing languages mid-sentence, like Hindi-English) still trips most engines.

Accent realism judged by native listeners. Word error rate and MOS scores from benchmarks tell you something, but not enough. Run blind tests with native speakers using your actual domain text: names, addresses, product SKUs, appointment confirmations.

Control. SSML support, prosody tags, emotion markers, phoneme dictionaries. These matter enormously for getting numbers, dates, and honorifics right across languages.

Economics. Price per million characters is the starting point, but factor in streaming costs, cloning fees, and volume tiers. One practitioner on Reddit reported cutting monthly speech API spend from $312 to $41 by switching providers and optimizing usage.

Speed. Time-to-first-audio (TTFA) and end-to-end turn time in real phone conditions, not just API benchmarks in ideal networks.

You can test all of this with actual call flows using tools like the SigmaMind Playground, which provides node-level logs so you can isolate TTS latency from other parts of your voice agent stack.
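One way to make the five LACES criteria comparable across engines is a weighted score per locale. The sketch below is a minimal illustration. It assumes you have already turned your own tests into 1–5 ratings per criterion. The weights, engine names, and ratings are hypothetical placeholders, not recommendations or measurements.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical weights for the five LACES criteria (they sum to 1.0); tune them to your priorities.
WEIGHTS = {"languages": 0.30, "accent": 0.25, "control": 0.15, "economics": 0.15, "speed": 0.15}


@dataclass
class EngineScores:
    """1-5 ratings for ONE engine in ONE locale, taken from your own tests."""
    name: str
    languages: float
    accent: float
    control: float
    economics: float
    speed: float


def laces_score(s: EngineScores) -> float:
    """Weighted average of the five criteria, still on the 1-5 scale."""
    return sum(getattr(s, criterion) * weight for criterion, weight in WEIGHTS.items())


def rank(candidates: List[EngineScores]) -> List[Tuple[str, float]]:
    """(engine, score) pairs, best first."""
    scored = [(c.name, round(laces_score(c), 2)) for c in candidates]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    # Illustrative ratings only, not measurements of any real engine.
    hindi = [
        EngineScores("engine_a", languages=5, accent=4, control=3, economics=2, speed=4),
        EngineScores("engine_b", languages=4, accent=3, control=4, economics=4, speed=4),
    ]
    print(rank(hindi))
```

Score each locale separately. An engine that tops the English ranking can land last for Hindi, which is why this guide recommends picking engines per language.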

Production Voice Agent Picks

These four engines are built for or commonly used in real-time conversational AI, where latency and streaming reliability matter as much as naturalness.

1. Cartesia Sonic-3

Best for: Real-time voice agents needing very low TTFA and rich emotion control.

Pricing: Usage-based with paid tiers; falls in the mid pricing band (~$30–40/1M chars). Confirm current rates on their pricing page.

Key features:

- Sub-second streaming architecture designed for conversational AI

- SSML emotion tags for controlling tone mid-utterance

- Community reports of 40+ languages and 600+ voices

- Widely integrated across voice agent platforms

Tradeoffs:

- Pricing details shift between tiers; confirm rate cards before committing to scale

- Less established voice cloning compared to ElevenLabs or Resemble

- Documentation for edge-case SSML behaviors still catching up

User perspective: Home Assistant and developer community members praise the voice catalog breadth and emotion control features. Integration threads show active adoption for smart home and agent use cases.

2. Rime AI (Arcana v3 / Mist)

Best for: Production conversational TTS tuned for bilingual English-Spanish realism and enterprise CX.

Pricing: Usage-based; mid pricing band. Rime's CLI and docs also document a language parameter that accepts ISO language codes.

Key features:

- Bilingual EN-ES low-latency model plus a broader multilingual model

- Emphasis on conversational micro-prosody (breaths, emphasis, natural pauses)

- Sub-500ms TTFA target for the bilingual model

- Strong focus on enterprise voice agent deployments

Tradeoffs:

- The fast bilingual model covers fewer languages; the broader multilingual model gives up some speed for that coverage

- Verify code-switching behavior in your specific locales before committing

- Independent benchmark comparisons are still catching up to vendor claims

User perspective: Partner demos show Rime preferred over some competing models, but treat such directional marketing cautiously until third-party tests validate the claims across your target languages.

3. Deepgram Aura-2

Best for: Teams already using Deepgram for speech-to-text who want a single-vendor voice stack.

Pricing: Mid-tier in aggregator comparisons. The value proposition centers on keeping your STT and TTS with one provider.

Key features:

- Recently added Dutch, French, German, Italian, and Japanese to an expanding language set

- Enterprise infrastructure with sub-200ms TTFA in some independent tests

- Tight integration with Deepgram’s STT products

Tradeoffs:

- Language coverage is still growing and trails hyperscalers and boutique leaders

- Limited public benchmarks versus top-tier engines

- Practitioners on Reddit report that streaming “is decent but can be choppy” in some releases, particularly in telephony loops

User perspective: Developers building voice agents appreciate the single-vendor simplicity but recommend testing extensively in your actual telephony environment before assuming demo-quality performance.

4. Microsoft Azure AI Speech (Neural TTS)

Best for: The largest voice catalog among hyperscalers, with enterprise compliance controls and SSML styles.

Pricing: Per-character with Neural and Custom tiers. Typical hyperscaler pricing in the mid band.

Key features:

- Frequently cited as the largest catalog across languages and voices

- Custom Neural Voice for brand-specific synthesis

- Rich SSML style support (newscast, cheerful, empathetic, etc.)

- Enterprise policy, compliance, and data residency options

Tradeoffs:

- Quality varies significantly by language; developer posts note “good for English; Hindi quality behind ElevenLabs for some use cases”

- Console and pricing complexity typical of hyperscaler platforms

- Updates to the voice catalog don’t always land evenly across regions

User perspective: Enterprise teams value the compliance posture and integration with the Azure ecosystem, but teams targeting South Asian or Middle Eastern languages should run native-listener evaluations rather than relying on catalog breadth alone.

Creator and Brand Voice Picks

These engines prioritize voice cloning, expressiveness, and studio-quality output. They work well for content production, dubbing, and brand voice applications, though they can also power voice agents at higher cost.

1. ElevenLabs (Multilingual v2/v3)

Best for: Creators and brands needing top-tier naturalness across major languages with a vast voice library.

Pricing: Premium band ($100+/1M chars at API scale). Plans range from Free to Starter (~$5/mo) to Creator (~$22/mo) to Pro/Business with per-character overages. Third-party breakdowns show that costs escalate quickly at volume.

Key features:

- Consistently rated among the most natural-sounding multilingual TTS engines

- Instant and Professional voice cloning

- Large stock voice library with streaming support

- Active model updates (v2 to v3 quality improvements noted by users)

Tradeoffs:

- Cost is the primary pain point at scale; users on Reddit frequently flag pricing as the deciding factor

- Plan and credit complexity can lead to surprise overages

- Some reports of support and UI friction during billing disputes

User perspective: The consensus across forums is clear: ElevenLabs sounds the best in Tier-1 languages but costs the most. One Reddit thread captured the sentiment perfectly, with a user noting that “pricing matters more than tiny quality differences once you scale your SaaS.”

2. PlayHT 2.x

Best for: Creators needing strong cloning and expressive voices with good multilingual breadth.

Pricing: Aggregators list plans starting around $39/mo; verify whether that figure covers API usage or only studio tiers. Falls in the mid-to-premium range depending on usage.

Key features:

- Strong voice cloning capabilities

- Expressive, emotion-rich synthesis

- Good multilingual language coverage

- API and studio interface options

Tradeoffs:

- Reviews note occasional robotic tone in certain non-English languages

- Community reports flag uneven uptime and reliability at certain points in the past

- Long-script handling can introduce artifacts; test with your actual content length

User perspective: Independent reviews praise the realism but recommend testing stability with production workloads before committing.

3. Resemble AI

Best for: Enterprise cloning workflows with brand safety features and deepfake detection tooling in regulated markets.

Pricing: Tiered with credit bundles, and multiple third-party trackers follow its price changes. Mid pricing band.

Key features:

- Enterprise-grade voice cloning with consent management

- Deepfake detection tooling (useful for regulated industries)

- Brand safety and watermarking features

- Decent multilingual coverage

Tradeoffs:

- Multilingual breadth is good but not market-leading

- Confirm voice rights and consent flows match your jurisdiction’s requirements

- Less community momentum compared to ElevenLabs or open-source options

User perspective: Trustpilot reviews highlight responsive customer service and a “less AI-sounding” timbre for some voices, though overall ratings are mixed.

Hyperscaler Picks

For teams that need maximum language breadth, enterprise SLAs, and tight cloud platform integration.

1. Google Cloud Text-to-Speech (Gemini TTS / WaveNet)

Best for: Broadest locale coverage of any single provider, with tight GCP integration.

Pricing: Pay-as-you-go per million characters. Mid pricing band. Editorial coverage cites 80+ locales as of 2026.

Key features:

- 80+ locales, the widest single-provider coverage available

- Strong SSML support across languages

- Gemini TTS now accessible via Cloud TTS APIs with updated voice sets

- Enterprise policies, data residency, and compliance controls

Tradeoffs:

- Some locales have limited or intermittently available voices

- IAM and console confusion appears in community posts, particularly after migrations

- Voice quality in Tier-2 and Tier-3 languages trails boutique specialists

User perspective: G2 reviewers describe solid quality and ease for multilingual narration workflows, but note configuration quirks when working across many locales simultaneously.

2. Amazon Polly (Including Generative TTS)

Best for: Stability, AWS integration, and mature SSML support across a broad language set.

Pricing: Per-character with Standard, Neural, and Generative tiers. Budget-to-mid band ($7–30/1M chars depending on voice type). Generative TTS expanded in late 2025.

Key features:

- Broad language and voice list with SSML across locales

- Generative TTS engine for improved naturalness

- Deep AWS ecosystem integration (Lambda, Connect, Lex)

- Predictable, well-documented pricing

Tradeoffs:

- Realism trails boutique leaders in many non-English locales

- G2 reviews trend toward “reliable but dated sounding” compared to newer engines

- Innovation pace slower than specialized TTS startups

User perspective: Teams already on AWS appreciate the reliability and operational simplicity, but those prioritizing voice quality in non-English markets should A/B test against boutique alternatives.

Open-Source and On-Device Picks

Cost equals compute here, and licensing varies. These options are great for privacy, latency control, and avoiding per-character API fees, but expect more engineering effort.

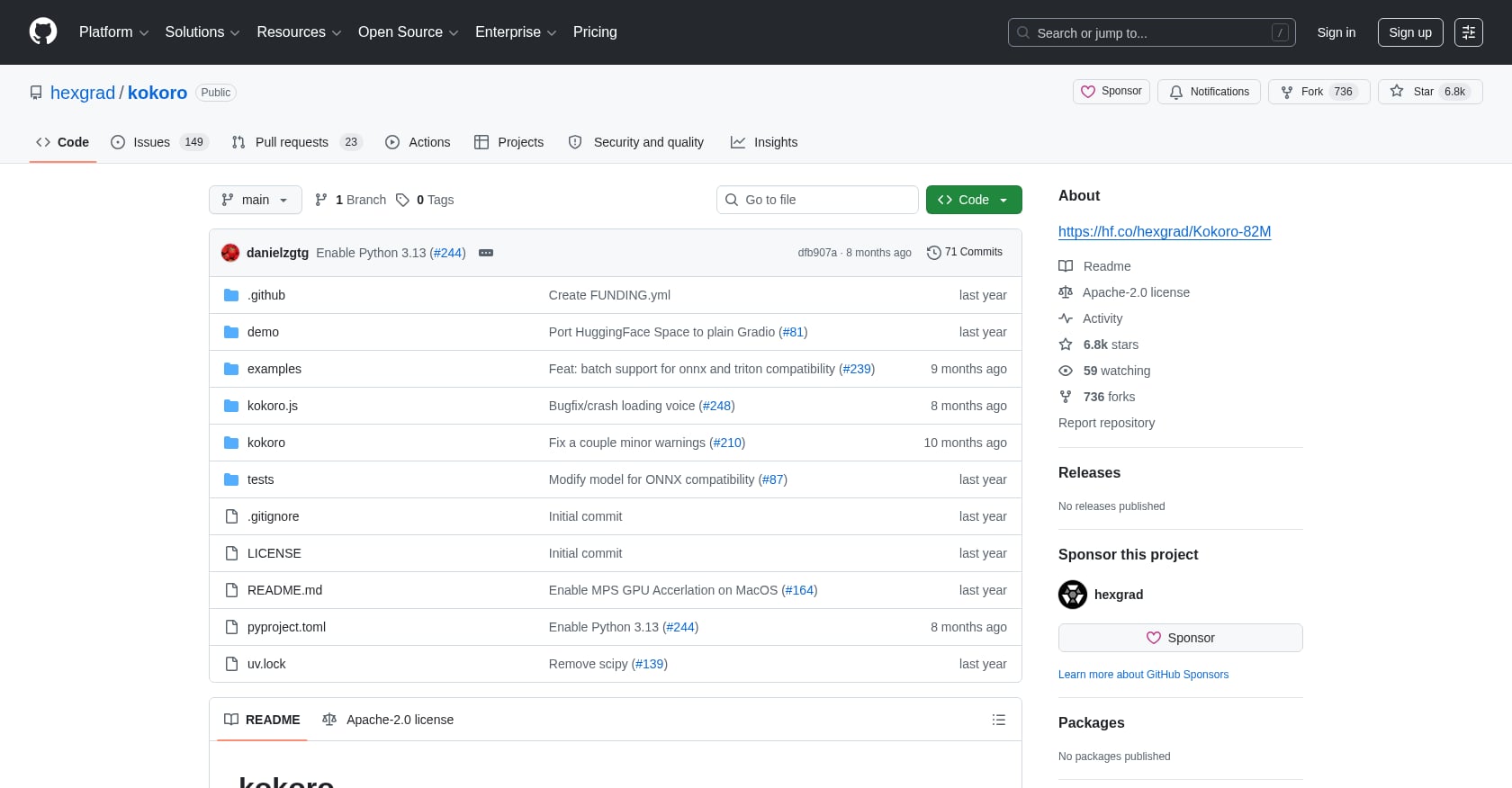

1. Kokoro TTS

Best for: Lightweight, on-device real-time synthesis with decent multilingual coverage.

Pricing: Open-weight. Your infrastructure cost only.

Key features:

- Runs natively on iOS, Android, and modest hardware

- Multilingual language packs available

- Fast inference speed relative to model size

- Active community extending capabilities

Tradeoffs:

- Chinese prosody sounds robotic to native ears, according to practitioners

- Fewer emotion and expressiveness controls than large commercial models

- Voice cloning exists as community add-ons but is not first-class in the base model

User perspective: Reddit users describe Kokoro as “the sweet spot for local real-time with decent multilingual,” but consistently flag limitations in tonal languages where prosody matters most.

2. Coqui XTTS-v2

Best for: Multilingual zero-shot voice cloning for hobbyists and advanced builders.

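Pricing: Open-weight, released under the Coqui Public Model License, which restricts commercial use; check the terms before shipping. Your inference and fine-tuning compute costs only.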

Key features:

- Zero-shot voice cloning across supported languages

- Practical setup guides and community documentation

- Good quality in well-supported languages

- Active community with workarounds for edge cases

Tradeoffs:

- Covers a fixed language set; forcing a language tag outside the training set causes accent bleed and unnatural output

- Requires meaningful ML engineering to fine-tune for production quality

User perspective: Builders using XTTS-v2 for audiobook and content projects report strong results in supported languages but warn against expecting it to generalize to languages outside its training data.

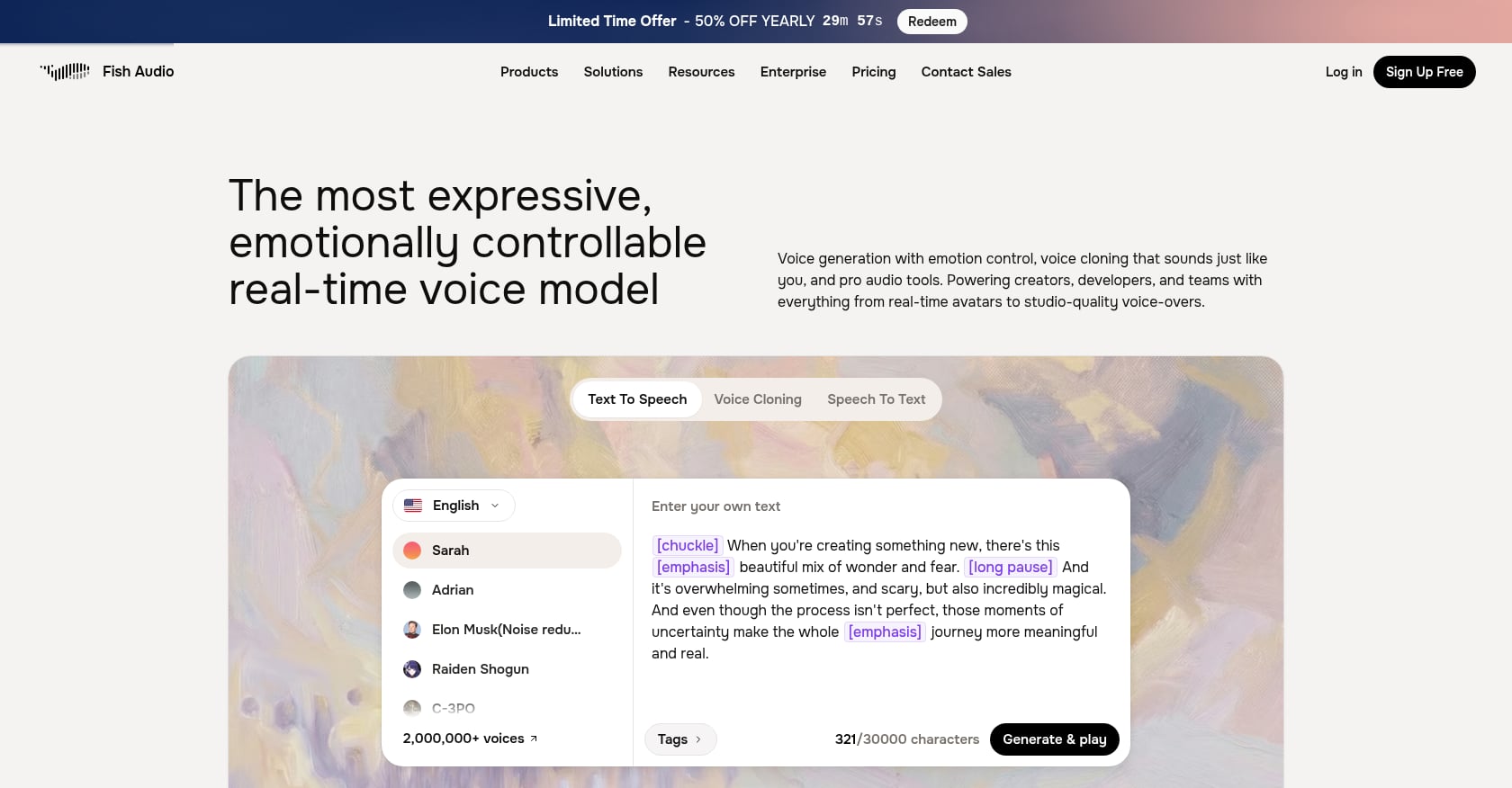

3. Fish Speech S2 / Qwen3-TTS / Mistral Voxtral / Hume TADA

Best for: Pushing the boundary of open multilingual TTS quality with emotion controls and rapid iteration.

Pricing: Open-weight or permissive licenses. Infrastructure costs only.

Key features:

- Fish Speech S2: natural emotion controls via text tags; competitive multilingual quality

- Mistral Voxtral: runs on ~3GB VRAM with 9 languages, dramatically changing on-device economics

- Qwen3-TTS: instruction-following synthesis with emerging multilingual support

- Hume TADA: token-aligned architecture for speed and stability in streaming

Tradeoffs:

- These are fast-moving projects; documentation and tooling maturity varies widely

- Verify licensing for commercial voice cloning use (each project differs)

- Quality benchmarks like MINT-Bench are brand new; expect rapid metric churn through 2026

User perspective: Practitioners on forums describe this cluster as “the future of multilingual TTS on a budget,” noting that open models are closing the gap with commercial APIs faster than anyone expected. As one user put it, these models running on consumer hardware “change the economics for on-device.”

Code-Switching, Accents, and Dialect Pitfalls

This is where most multilingual TTS comparisons fall short, and where production deployments actually fail.

Code-switching is still broken on most engines. Mixing Hindi and English mid-sentence (common in Indian customer support), or Arabic and French (common in North African markets), trips nearly every commercial engine. The synthesis either drops into one language’s phoneme set or produces an uncanny hybrid that native speakers immediately reject.

Dialect matters more than language. Mexican Spanish and Castilian Spanish have different rhythms, vocabulary, and vowel qualities. Gulf Arabic and Levantine Arabic are practically different languages to native listeners. Most engines let you select a language, but dialect-level control is limited or nonexistent.

Phone-quality audio exposes everything. Benchmarks from studio recordings don’t predict telephony performance. Practitioners report meaningful drop-offs in narrowband audio, with streaming loops introducing artifacts that studio demos never show. Budget time for tuning punctuation and pauses. Something as simple as adding a comma before a number can materially improve perceived quality.
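The comma trick can be automated as a text preprocessing step. This minimal sketch inserts a comma between a word and a run of three or more digits, such as order numbers, phone numbers, and SKUs. The three-digit threshold is an assumption meant to leave short quantities like dates alone. Tune it against your own native-listener results.

```python
import re

# Whitespace between a letter and a run of 3+ digits, e.g. "number 4821" or "call 5551234".
GAP_BEFORE_LONG_NUMBER = re.compile(r"(?<=[^\W\d_])\s+(?=\d{3,})")


def add_pause_before_numbers(text: str) -> str:
    """Insert a comma so the engine typically takes a short pause before reading a long number."""
    return GAP_BEFORE_LONG_NUMBER.sub(", ", text)


if __name__ == "__main__":
    print(add_pause_before_numbers("Your order number is 4821 and it ships on 12 May"))
```

The sample prints "Your order number is, 4821 and it ships on 12 May". The date is untouched because "12" is shorter than the threshold.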

For teams deploying multilingual customer support agents or appointment scheduling flows, these issues are not edge cases. They are the daily reality.

Budget Scenarios: Real Math for Multilingual TTS at Scale

Forget vendor pricing pages for a moment. Here is what multilingual TTS actually costs when you run the numbers on 10,000 calls per month, assuming an average call generates roughly 3,000 characters of TTS output.

That is 30 million characters per month.

| Pricing Band | Cost per 1M Chars | Monthly TTS Cost (30M chars) |
|---|---|---|
| Budget ($7–15) | ~$10 | ~$300 |
| Mid ($30–40) | ~$35 | ~$1,050 |
| Premium ($100–200) | ~$150 | ~$4,500 |

The spread between budget and premium is 15x. At 50,000 calls per month, you are looking at $1,500 versus $22,500 in TTS costs alone. This is why one SaaS founder on Reddit described the TTS line item as “the biggest budget lever” in their voice agent stack.
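The arithmetic behind these figures is simple enough to keep in a script. You can then rerun it with your own call volume, characters per call, and negotiated rates. This sketch takes the midpoint prices from the pricing table as assumptions.

```python
def monthly_tts_cost(calls: int, chars_per_call: int, usd_per_million_chars: float) -> float:
    """Monthly TTS spend in USD: total characters / 1M * price per 1M characters."""
    total_chars = calls * chars_per_call
    return total_chars / 1_000_000 * usd_per_million_chars


# Midpoint prices per 1M characters, taken from the pricing table.
BANDS = {"budget": 10.0, "mid": 35.0, "premium": 150.0}

if __name__ == "__main__":
    for calls in (10_000, 50_000):
        costs = {band: monthly_tts_cost(calls, 3_000, price) for band, price in BANDS.items()}
        print(calls, costs)
```

At 3,000 characters per call, this reproduces the $300, $1,050, and $4,500 monthly figures for 10,000 calls. It also reproduces the $1,500 versus $22,500 spread for 50,000 calls.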

The smart move: use a premium engine for languages where quality directly affects conversion (your primary market) and a mid-tier or open-source engine for secondary languages. You can track all of this with layered analytics and cost breakdowns that show spend per TTS provider, per language, per call.
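To estimate what the mixed strategy saves, weight each language's share of characters by the price of the engine you route it to. The language mix and per-language prices below are hypothetical. They model a primary English market on a premium engine, with Spanish and Hindi on cheaper engines.

```python
from typing import Dict


def blended_monthly_cost(
    total_chars: int,
    share_by_lang: Dict[str, float],
    usd_per_million_by_lang: Dict[str, float],
) -> float:
    """Monthly TTS cost when each language is routed to an engine with its own per-1M-character price."""
    if abs(sum(share_by_lang.values()) - 1.0) > 1e-9:
        raise ValueError("language shares must sum to 1")
    return sum(
        total_chars * share / 1_000_000 * usd_per_million_by_lang[lang]
        for lang, share in share_by_lang.items()
    )


if __name__ == "__main__":
    # Hypothetical mix: English is the primary market and goes to a premium engine.
    shares = {"en": 0.6, "es": 0.3, "hi": 0.1}
    prices = {"en": 150.0, "es": 35.0, "hi": 10.0}
    blended = blended_monthly_cost(30_000_000, shares, prices)
    all_premium = blended_monthly_cost(30_000_000, {"en": 1.0}, {"en": 150.0})
    print(f"blended ${blended:,.0f} vs all-premium ${all_premium:,.0f}")
```

With 30M characters split 60/30/10, the blend comes to about $3,045 per month. Sending everything to the premium engine costs $4,500.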

Deployment Playbook: How to Orchestrate Multilingual TTS in Production

Here is the operational approach that works:

Pick 2 engines per target language. Run them as A/B options so you have a fallback if one vendor degrades or raises prices. For your highest-volume language, add an open-source fallback for cost spikes.
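A/B assignment should be deterministic per call. That way, recordings, logs, and listener ratings line up with the engine that produced them. This minimal sketch hashes the call ID to pick an arm. If that engine is marked unhealthy, it falls back to the other arm and finally to an open-source model. The engine names and per-locale split are hypothetical.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class LocaleEngines:
    engine_a: str
    engine_b: str
    fallback: str          # e.g. a self-hosted open-source model for outages or cost spikes
    b_share: float = 0.5   # fraction of calls assigned to engine_b


# Hypothetical engine identifiers; substitute your own provider and voice IDs.
ROUTES: Dict[str, LocaleEngines] = {
    "en-US": LocaleEngines("premium_vendor", "mid_vendor", "open_source_local"),
    "es-MX": LocaleEngines("mid_vendor", "bilingual_vendor", "open_source_local", b_share=0.2),
}


def ab_bucket(call_id: str) -> float:
    """Stable number in [0, 1) from the call ID (unlike hash(), SHA-256 is not salted per process)."""
    digest = hashlib.sha256(call_id.encode("utf-8")).digest()
    return (int.from_bytes(digest[:8], "big") >> 11) / 2**53  # 53 bits keeps the result below 1.0


def engine_order(locale: str, call_id: str, unhealthy: Set[str]) -> List[str]:
    """Engines to try in order: this call's A/B arm, the other arm, then the fallback."""
    r = ROUTES[locale]
    if ab_bucket(call_id) < r.b_share:
        arms = (r.engine_b, r.engine_a)
    else:
        arms = (r.engine_a, r.engine_b)
    return [e for e in (*arms, r.fallback) if e not in unhealthy]


if __name__ == "__main__":
    print(engine_order("es-MX", "call-123", unhealthy=set()))
    print(engine_order("es-MX", "call-123", unhealthy={"mid_vendor"}))
```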

Route dynamically by quality and cost. Use an orchestration layer that can switch TTS providers per call based on language, caller region, or cost thresholds. SigmaMind AI’s model-agnostic platform does exactly this, supporting TTS providers like ElevenLabs, Rime, and Cartesia while giving developers control over nodes, tool calls, and stateful voice workflows.
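A routing rule can be as small as this: take the best-rated engine for the caller's language whose expected cost fits a per-call budget. The sketch below assumes you have native-listener quality scores and per-character rates for each candidate. All names and numbers are hypothetical. A production router would also weigh latency and health signals.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Candidate:
    engine: str             # hypothetical engine identifier
    quality: float          # mean native-listener rating (1-5) for this locale
    usd_per_million: float  # your rate per 1M characters (amortized compute for self-hosted)


def expected_cost(c: Candidate, expected_chars: int) -> float:
    return expected_chars / 1_000_000 * c.usd_per_million


def pick_engine(candidates: List[Candidate], expected_chars: int, max_cost_usd: float) -> Candidate:
    """Best-rated engine whose expected per-call cost fits the cap; the cheapest one if none fits."""
    affordable = [c for c in candidates if expected_cost(c, expected_chars) <= max_cost_usd]
    if affordable:
        # Ties on quality go to the cheaper engine.
        return max(affordable, key=lambda c: (c.quality, -c.usd_per_million))
    return min(candidates, key=lambda c: expected_cost(c, expected_chars))


if __name__ == "__main__":
    hindi = [
        Candidate("vendor_a", 4.4, 150.0),
        Candidate("vendor_b", 4.1, 35.0),
        Candidate("self_hosted", 3.6, 2.0),
    ]
    for cap in (0.50, 0.20, 0.05):
        print(cap, pick_engine(hindi, expected_chars=3_000, max_cost_usd=cap).engine)
```

Raising or lowering `max_cost_usd` by region or customer tier is one simple way to express the cost thresholds described above.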

Instrument TTFA and turn time. Measure time-to-first-audio at p50 and p95 in your actual telephony environment, not just API benchmarks. Track interruption handling and barge-in recovery. These operational metrics matter more than MOS scores for voice agent quality.
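Measuring TTFA means timing from the moment you issue the synthesis request to the first non-empty audio chunk. Report percentiles rather than averages. This sketch assumes your TTS client exposes a streaming call that returns an iterable of audio bytes. `start_stream` is a placeholder for that call, and the percentiles use the nearest-rank method.

```python
import math
import time
from typing import Callable, Iterable, List


def measure_ttfa_ms(start_stream: Callable[[], Iterable[bytes]]) -> float:
    """Milliseconds from issuing the request until the first non-empty audio chunk arrives."""
    start = time.perf_counter()
    for chunk in start_stream():  # the request is issued inside the timed window
        if chunk:
            return (time.perf_counter() - start) * 1000.0
    raise RuntimeError("stream ended without any audio")


def percentile(samples: List[float], p: float) -> float:
    """Nearest-rank percentile for 0 < p <= 100."""
    if not samples or not 0 < p <= 100:
        raise ValueError("need samples and 0 < p <= 100")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]


if __name__ == "__main__":
    def fake_stream() -> Iterable[bytes]:
        # Stand-in for a real streaming TTS call: ~50 ms before the first chunk.
        time.sleep(0.05)
        yield b"\x00" * 320

    ttfa = [measure_ttfa_ms(fake_stream) for _ in range(5)]
    print(f"p50={percentile(ttfa, 50):.0f} ms  p95={percentile(ttfa, 95):.0f} ms")
```

Run the same measurement from inside your telephony path, not from a laptop next to the API, so that the numbers reflect what callers hear.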

Set up warm transfer with context. When a multilingual voice agent needs to escalate to a human, the handoff should include a summary and structured context so the human agent does not ask the caller to repeat everything. This is critical for multilingual deployments where the human agent may not speak the caller’s language. SigmaMind supports warm transfer with structured context on handoff so nothing gets lost in translation.
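A warm transfer is easiest to get right when the context travels as one structured payload. The dataclass below is a hypothetical shape for that payload. Its field names are illustrative, not SigmaMind's or any CRM's schema. It carries the summary in both the agent's working language and the caller's language, plus a flag for interpreter routing.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict


@dataclass
class HandoffContext:
    """Hypothetical warm-transfer payload; field names are illustrative, not any platform's schema."""
    call_id: str
    caller_language: str          # BCP 47 tag, e.g. "hi-IN"
    intent: str
    summary_agent_lang: str       # summary in the human agent's working language
    summary_caller_lang: str      # same summary in the caller's language
    collected: Dict[str, str] = field(default_factory=dict)  # already-verified details (order ID, name, ...)
    needs_interpreter: bool = False

    def to_json(self) -> str:
        # ensure_ascii=False keeps Devanagari, Arabic, etc. readable in CRM notes and logs.
        return json.dumps(asdict(self), ensure_ascii=False)


if __name__ == "__main__":
    ctx = HandoffContext(
        call_id="call-123",
        caller_language="hi-IN",
        intent="reschedule_delivery",
        summary_agent_lang="Caller wants to move delivery of order 4821 to Friday.",
        summary_caller_lang="ग्राहक ऑर्डर 4821 की डिलीवरी शुक्रवार को करवाना चाहते हैं।",
        collected={"order_id": "4821"},
        needs_interpreter=True,
    )
    print(ctx.to_json())
```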

Build test scripts per language family. Create standardized scripts that stress-test names, dates, currency amounts, and domain jargon. Run them through your TTS engines and have native listeners rate them blind. This is the only reliable way to evaluate multilingual TTS quality for your specific use case.
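Blind rating only works if listeners never see which engine produced a clip. This minimal sketch assigns shuffled anonymous labels per script line. Once the ratings are in, it averages the 1–5 scores per engine and dimension. Engine names and ratings are hypothetical. A separate shuffle per line keeps listeners from learning which position belongs to which engine.

```python
import random
from collections import defaultdict
from statistics import mean
from typing import Dict, List, Tuple


def blind_labels(engines: List[str], seed: int) -> Dict[str, str]:
    """Map anonymous labels ("sample_1", ...) to engine names. Listeners only see the labels."""
    shuffled = list(engines)
    random.Random(seed).shuffle(shuffled)
    return {f"sample_{i + 1}": name for i, name in enumerate(shuffled)}


def aggregate(
    ratings: List[Tuple[str, str, str, int]],
    keys: Dict[str, Dict[str, str]],
) -> Dict[str, Dict[str, float]]:
    """ratings: (line_id, label, dimension, score 1-5); keys: line_id -> label -> engine."""
    buckets: Dict[str, Dict[str, List[int]]] = defaultdict(lambda: defaultdict(list))
    for line_id, label, dimension, score in ratings:
        if not 1 <= score <= 5:
            raise ValueError(f"score out of range: {score}")
        buckets[keys[line_id][label]][dimension].append(score)
    return {engine: {dim: mean(vals) for dim, vals in dims.items()} for engine, dims in buckets.items()}


if __name__ == "__main__":
    engines = ["vendor_a", "vendor_b"]
    script = ["line1", "line2"]  # e.g. a name, a date, a currency amount, a code-switching sentence
    keys = {line: blind_labels(engines, seed=i) for i, line in enumerate(script)}
    print(keys)  # keep this mapping away from listeners until rating is finished
```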

You can build and test these flows using a no-code agent builder with branching and tool calls, then connect CRMs, helpdesks, and commerce platforms through an integration library so your voice agents actually complete tasks rather than just answering questions.

Voice Cloning Rights and Regulatory Landscape

This section matters more in 2026 than ever. U.S. policymakers are actively pushing for voice cloning consent requirements, and the regulatory direction points one way: explicit consent for voice cloning is likely to become mandatory, not optional.

For multilingual deployments, this has specific implications:

- Consent language must be understandable to speakers of each target locale

- Data locality requirements vary by country; deployments serving EU callers may need EU-hosted synthesis

- Open-source model licensing varies project to project; some prohibit commercial cloning use

- Vendor consent flows differ significantly; verify that your chosen engine’s consent process meets your jurisdiction’s requirements before deploying cloned voices

Treat voice rights as a non-negotiable checklist item, not a “we’ll figure it out later” problem.

FAQ

How many languages do multilingual TTS engines actually support well?

Most vendors claim 30 to 80+ languages, but effective support is tiered. Expect strong quality in about 9 to 12 Tier-1 languages (English, Spanish, French, German, Italian, Portuguese, Mandarin, Japanese, Korean). Tier-2 languages (Arabic, Hindi, Turkish, Dutch, Russian, Polish) are functional but uneven. Everything beyond that often regresses to accented output that native listeners will notice immediately. Always test with native speakers in your specific locales.

Does code-switching work in current multilingual TTS engines?

Poorly, in most cases. Mixing languages mid-sentence (like Hindi-English or Arabic-French) still trips nearly every engine as of April 2026. Practitioners on Reddit report that quality differences show up most with native listeners and phone-quality audio. If code-switching is critical for your use case, test it rigorously before committing.

What is a good time-to-first-audio (TTFA) target for voice agents?

For conversational voice agents, sub-500ms TTFA keeps interactions feeling natural. Sub-200ms is achievable with some engines in ideal conditions. But measure in your actual environment: telephony infrastructure, network latency, and the rest of your voice pipeline (STT, LLM processing) all add to the total turn time. Aim to keep total voice-to-voice latency under one second.
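The one-second target works as a budget that every stage draws from. A quick way to keep it honest is to subtract each turn's measured per-stage latencies from the budget. The stage names and millisecond values here are hypothetical placeholders for numbers from your own traces.

```python
from typing import Dict


def latency_headroom_ms(stage_ms: Dict[str, float], budget_ms: float = 1000.0) -> float:
    """Budget left after one turn's stage latencies; negative means the turn is over budget."""
    return budget_ms - sum(stage_ms.values())


if __name__ == "__main__":
    # Hypothetical timings for a single turn; replace with values from your own traces.
    turn = {
        "stt_final_transcript": 150.0,
        "llm_first_token": 350.0,
        "tts_ttfa": 200.0,
        "telephony_and_network": 150.0,
    }
    print(f"headroom: {latency_headroom_ms(turn):.0f} ms")
```

Applying it to every turn, rather than to averaged stage timings, shows how often real turns exceed the budget and which stage is responsible.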

Can open-source multilingual TTS models replace commercial APIs?

For some use cases, yes. Models like Voxtral run on roughly 3GB of VRAM with 9 languages, and Kokoro handles on-device synthesis well in supported languages. The tradeoffs are engineering effort, uneven quality in tonal and Tier-2 languages, and licensing restrictions that vary by project. For high-volume production workloads where privacy or cost control matters, open-source models make excellent fallbacks alongside commercial primary engines.

How much does multilingual TTS cost at scale?

Pricing bands range from roughly $7 to $15 per million characters (budget), $30 to $40 (mid-tier), and $100 to $200+ (premium). At 10,000 calls per month generating ~30 million characters, that is $300 to $4,500 per month in TTS costs alone. The TTS line item is often the single largest variable cost in a voice agent stack. Plan your per-layer costs before committing to any engine.

What SSML features matter most for multilingual TTS?

Numeric reading rules (dates, currencies, phone numbers), pause control, and phoneme overrides are the highest-impact SSML features for multilingual deployments. Numbers in Arabic are read differently depending on context. Japanese honorifics need specific pronunciation. Hindi-English mixed sentences need explicit language switching tags. If your engine does not support granular SSML in your target language, your output will sound wrong in ways that erode caller trust.
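Most of these controls map to standard SSML elements: `<say-as>` for dates and numbers, `<break>` for pauses, and `<phoneme>` for pronunciation overrides. The helpers below are a minimal sketch that builds properly escaped SSML strings. Which `interpret-as` and `format` values an engine accepts differs by vendor and locale, so check each provider's SSML reference. The IPA string in the usage example is an illustrative placeholder.

```python
from typing import Optional
from xml.sax.saxutils import escape, quoteattr


def plain(text: str) -> str:
    """Literal text, XML-escaped so characters like & and < cannot break the document."""
    return escape(text)


def say_as(text: str, interpret_as: str, fmt: Optional[str] = None) -> str:
    """Ask the engine to read text as a date, currency, telephone number, etc."""
    fmt_attr = f" format={quoteattr(fmt)}" if fmt else ""
    return f"<say-as interpret-as={quoteattr(interpret_as)}{fmt_attr}>{escape(text)}</say-as>"


def phoneme(text: str, ipa: str) -> str:
    """Override pronunciation with an IPA transcription."""
    return f'<phoneme alphabet="ipa" ph={quoteattr(ipa)}>{escape(text)}</phoneme>'


def pause(ms: int) -> str:
    return f'<break time="{int(ms)}ms"/>'


def speak(lang: str, *fragments: str) -> str:
    """Wrap already-built fragments in a <speak> root for the given locale."""
    body = "".join(fragments)
    return (
        '<speak version="1.0" xmlns="http://www.w3.org/2001/10/synthesis" '
        f"xml:lang={quoteattr(lang)}>{body}</speak>"
    )


if __name__ == "__main__":
    print(speak(
        "en-IN",
        plain("Your appointment with Dr. "),
        phoneme("Nguyen", "wɪn"),  # placeholder IPA; get the real one from a native speaker
        plain(" is on "),
        say_as("05/06/2026", "date", "dmy"),
        pause(300),
        plain(" Reply yes to confirm."),
    ))
```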

Should I use one TTS provider or multiple?

Multiple. The quality and cost differences between engines vary by language, and vendors update models frequently (sometimes improving, sometimes regressing). The rule of thumb for 2026: pick two engines per target language as A/B options, add one open-source fallback for cost spikes, and use an orchestration platform that lets you route dynamically. Sign up for SigmaMind to A/B test multilingual TTS engines across your actual call flows and swap providers without re-architecting.

How do I evaluate multilingual TTS quality fairly?

Build a test script per language family that includes names, dates, currency amounts, domain-specific terms, and at least one code-switching sentence. Run it through your candidate engines. Have native listeners rate the output blind, on a 1 to 5 scale for naturalness, intelligibility, and accent appropriateness. Do this over phone-quality audio, not studio playback. New evaluation benchmarks like MINT-Bench are emerging, but nothing replaces domain-specific native-listener testing for your actual use case.